The Elder Scrolls IV: Oblivion

The Elder Scrolls IV: Oblivion

The Elder Scrolls V: Skyrim

The Elder Scrolls V: Skyrim

The Elder Scrolls: Online

The Elder Scrolls: Online

Fallout: New Vegas

Fallout: New Vegas

Fallout 4

Fallout 4

Fallout 76

Fallout 76

Mount & Blade: Warband

Mount & Blade: Warband

Mount & Blade II: Bannerlord

Mount & Blade II: Bannerlord

Kenshi

Kenshi

The Witcher 3: Wild Hunt

The Witcher 3: Wild Hunt

Cyberpunk 2077

Cyberpunk 2077

Kingdom Come: Deliverance

Kingdom Come: Deliverance

Minecraft

Minecraft

Crusader Kings 2

Crusader Kings 2

Crusader Kings 3

Crusader Kings 3

Hearts of Iron IV

Hearts of Iron IV

Stellaris

Stellaris

Cities: Skylines

Cities: Skylines

Cities: Skylines II

Cities: Skylines II

Prison Architect

Prison Architect

RimWorld

RimWorld

Euro Truck Simulator 2

Euro Truck Simulator 2

American Truck Simulator

American Truck Simulator

Microsoft Flight Simulator 2020

Microsoft Flight Simulator 2020

Farming Simulator 17

Farming Simulator 17

Farming Simulator 19

Farming Simulator 19

Spintires и Spintires: MudRunner

Spintires и Spintires: MudRunner

BeamNG.drive

BeamNG.drive

My Summer Car

My Summer Car

My Winter Car

My Winter Car

OMSI 2

OMSI 2

Grand Theft Auto: V

Grand Theft Auto: V

Red Dead Redemption 2

Red Dead Redemption 2

Mafia 2

Mafia 2

Stormworks: Build and Rescue

Stormworks: Build and Rescue

Atomic Heart

Atomic Heart

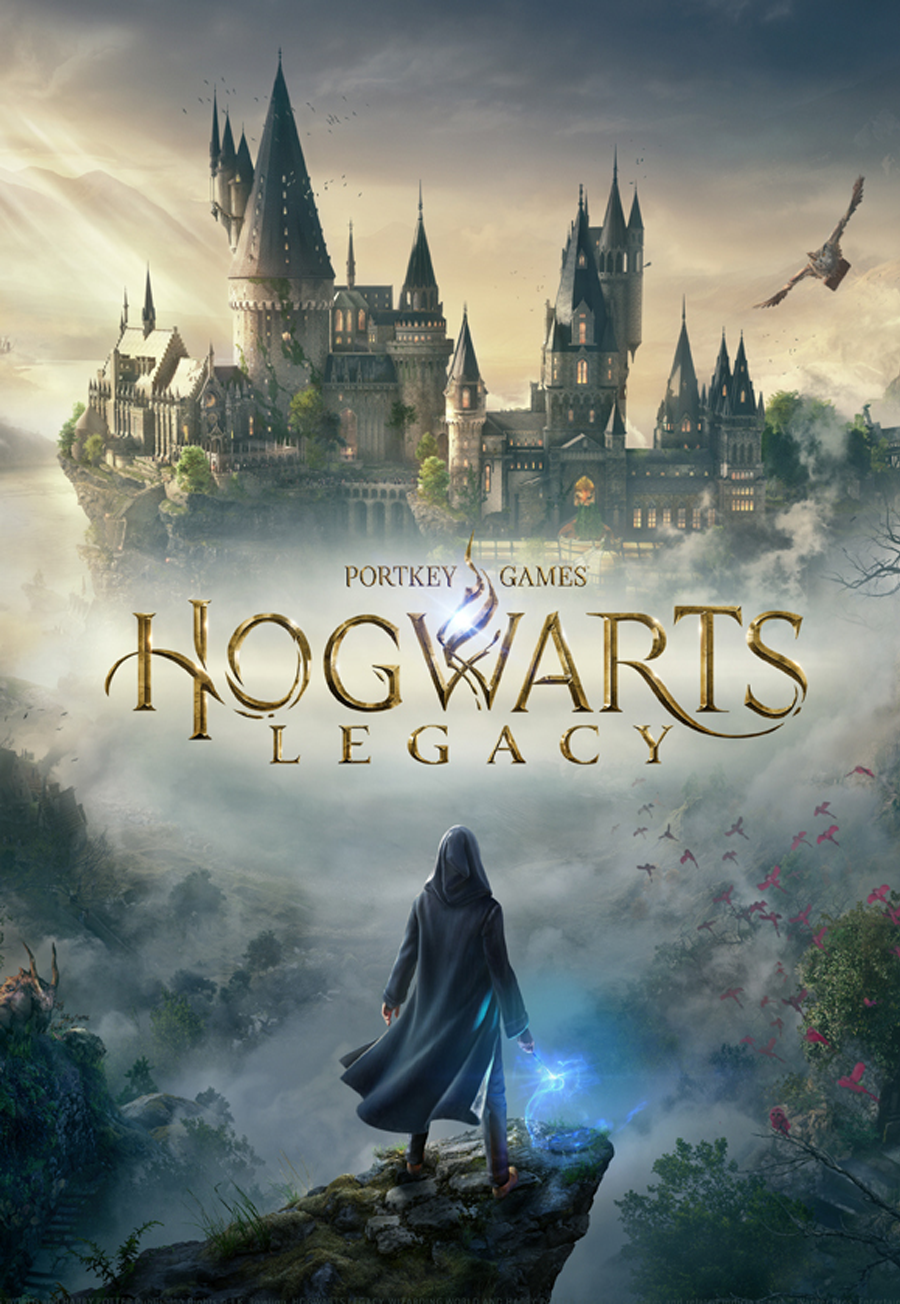

Hogwarts Legacy

Hogwarts Legacy

First, . Traditional DP assumes the Markov property: the future depends only on the present. With AdvFs, we can encode sufficient statistics of history into an augmented state space. For example, a value function that includes a belief state (in a Partially Observable MDP) allows DP to solve problems with hidden information—a notoriously difficult class.

In the landscape of computational problem-solving, few paradigms balance mathematical elegance with raw practical power as effectively as Dynamic Programming (DP). At its core, DP is a method for solving complex problems by breaking them down into simpler subproblems, storing the results to avoid redundant computation. However, when DP is elevated to interact with what we term "Advanced Value Functions" (AdvF)—sophisticated metrics that assess the long-term utility of states or decisions—it transforms from a mere algorithmic trick into a philosophical framework for decision-making under uncertainty. This essay explores how the marriage of DP and AdvF creates a robust architecture for reasoning about optimization, learning, and intelligent behavior. The Foundation: From Recursion to Value Classic dynamic programming, as formalized by Richard Bellman in the 1950s, rests on the principle of optimality: an optimal policy has the property that, whatever the initial state and decision are, the remaining decisions must constitute an optimal policy with regard to the state resulting from the first decision. This recursive decomposition is powerful, but naive implementation leads to exponential time complexity. DP solves this through memoization or tabulation , effectively trading space for time.

Another domain is financial portfolio optimization. State space (wealth, market conditions) is huge. An AdvF that encodes risk-adjusted return (e.g., Sharpe ratio or downside risk) can be updated via DP backward induction, producing an optimal rebalancing strategy over time—something traditional mean-variance optimization fails to capture dynamically.