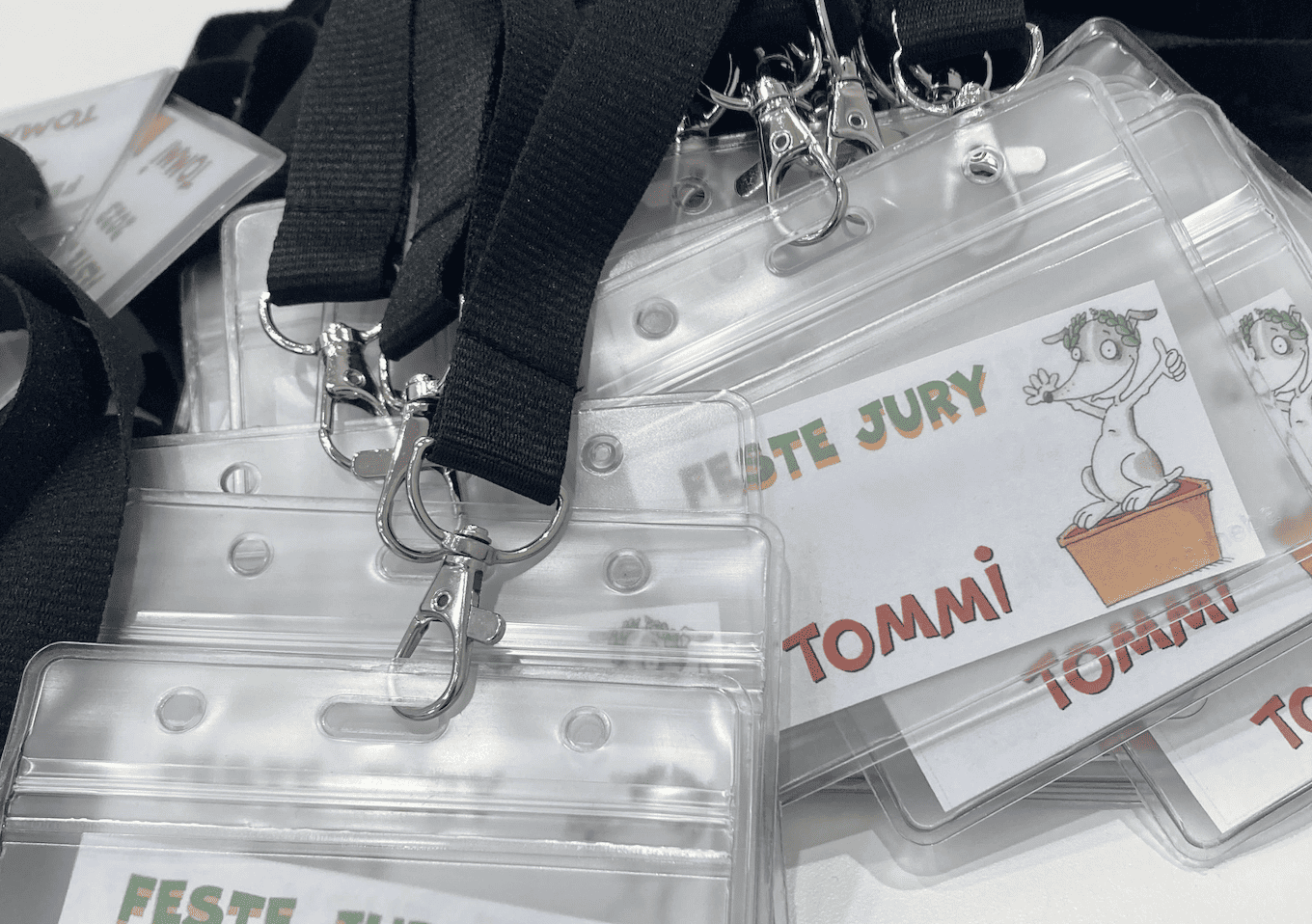


Patch MPT

If you meant something else (ECU patch, firmware, audio plugin), let me know.

Context: MPT (MosaicML Pretrained Transformer) uses ALiBi or rotary position embeddings. This patch fixes two things in a custom MPT block: invalidation of the rotary position cache, and expansion of the attention mask for variable-length sequences.
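To make the cache-invalidation idea concrete, here is a minimal sketch, not the patch itself. The class name `RotaryCacheSketch`, the `rebuilds` counter, and the choice to also invalidate on device or dtype changes are assumptions for illustration. The cos/sin tables are rebuilt only when the sequence length (or device/dtype) differs from what is cached.

```python
import torch


class RotaryCacheSketch:
    """Hypothetical helper: caches cos/sin tables for rotary embeddings."""

    def __init__(self, head_dim: int, base: float = 10000.0):
        # One inverse frequency per pair of channels.
        self.inv_freq = 1.0 / (base ** (torch.arange(0, head_dim, 2).float() / head_dim))
        self.cached_len = 0
        self.cos = None
        self.sin = None
        self.rebuilds = 0  # demonstration-only counter

    def get(self, x: torch.Tensor):
        """x has shape (batch, heads, seq_len, head_dim)."""
        seq_len = x.shape[-2]
        stale = (
            self.cos is None
            or self.cached_len != seq_len
            or self.cos.device != x.device
            or self.cos.dtype != x.dtype
        )
        if stale:
            # Rebuild the tables only when the cached ones no longer fit.
            t = torch.arange(seq_len, device=x.device, dtype=torch.float32)
            freqs = torch.outer(t, self.inv_freq.to(x.device))  # (seq_len, head_dim / 2)
            emb = torch.cat((freqs, freqs), dim=-1)  # (seq_len, head_dim)
            self.cos = emb.cos().to(x.dtype)
            self.sin = emb.sin().to(x.dtype)
            self.cached_len = seq_len
            self.rebuilds += 1
        return self.cos, self.sin
```

Calling `get` twice with inputs of the same length returns the same cached tensors; a different length triggers exactly one rebuild.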

```python
import torch

# Test attention mask expansion
# (assumes patch_attention_mask from the patch is already in scope)
mask_2d = torch.tensor([[0, 0, 1, 1]])  # batch=1, key_len=4
expanded = patch_attention_mask(mask_2d, query_len=3, key_len=4, dtype=torch.float32)
print(f"Expanded mask shape: {expanded.shape}")  # (1, 1, 3, 4)
print(expanded)
```

| Issue | Before patch | After patch |
|-------|--------------|-------------|
| Rotary cache | Recomputes on every call, wasting memory | Recomputes only when the sequence length changes |
| Mask expansion | Supports only 2D masks | Supports 2D/3D/4D masks with correct broadcasting |
| Cross-attention | Mask shape mismatch | Proper `(batch, 1, q_len, k_len)` shape |

If you meant a firmware patch for an MPT controller (as in automotive systems or industrial PLCs), I can write a .bin patching script in Python or C. Just clarify the target.

```python
# Fragment from the mask-expansion function (earlier branches not shown)
# Case: (batch, 1, key_len)
elif attention_mask.dim() == 3 and attention_mask.size(1) == 1:
    mask = attention_mask[:, :, None, :]
else:
    raise ValueError(f"Unexpected mask shape: {attention_mask.shape}")
```
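For reference, here is one way the whole mask expansion could look. This is a hedged reconstruction, not the patch's actual `patch_attention_mask`: the name `expand_mask_sketch`, the branch order, and the convention that 1 means "attend" and 0 means "masked" (turned into an additive mask) are all assumptions.

```python
import torch


def expand_mask_sketch(attention_mask, query_len, key_len, dtype=torch.float32):
    """Hypothetical sketch: broadcast a 2D/3D/4D mask to (batch, 1, q_len, k_len)."""
    if attention_mask.dim() == 2:
        # (batch, key_len) -> (batch, 1, 1, key_len)
        mask = attention_mask[:, None, None, :]
    elif attention_mask.dim() == 4:
        # Already (batch, 1 or heads, q_len or 1, key_len)
        mask = attention_mask
    elif attention_mask.dim() == 3 and attention_mask.size(1) == query_len:
        # (batch, query_len, key_len) -> (batch, 1, query_len, key_len)
        mask = attention_mask[:, None, :, :]
    elif attention_mask.dim() == 3 and attention_mask.size(1) == 1:
        # (batch, 1, key_len) -> (batch, 1, 1, key_len)
        mask = attention_mask[:, :, None, :]
    else:
        raise ValueError(f"Unexpected mask shape: {attention_mask.shape}")

    # Broadcast singleton query/key dims; -1 keeps the existing size.
    mask = mask.expand(-1, -1, query_len, key_len)

    # Assumed convention: 0 marks a masked position. Build an additive mask
    # that is 0 where attention is allowed and the dtype's minimum elsewhere.
    additive = torch.zeros(mask.shape, dtype=dtype, device=mask.device)
    return additive.masked_fill(mask == 0, torch.finfo(dtype).min)
```

With the 2D mask `[[0, 0, 1, 1]]`, `query_len=3`, and `key_len=4`, this sketch returns a `(1, 1, 3, 4)` tensor whose first two key columns hold the large negative fill value and whose last two hold zeros.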